Unexpected findings (Chroneme)

- Zohaib Akhtar MD MPH

- 3 hours ago

Unexpected findings this week. Most clinical AI models stall before they reach a second hospital. Epic's sepsis model is the textbook case. It's one of the most widely deployed clinical AI tools in the US, running in hundreds of hospitals. It's sold as a way to warn doctors hours before a patient develops sepsis.

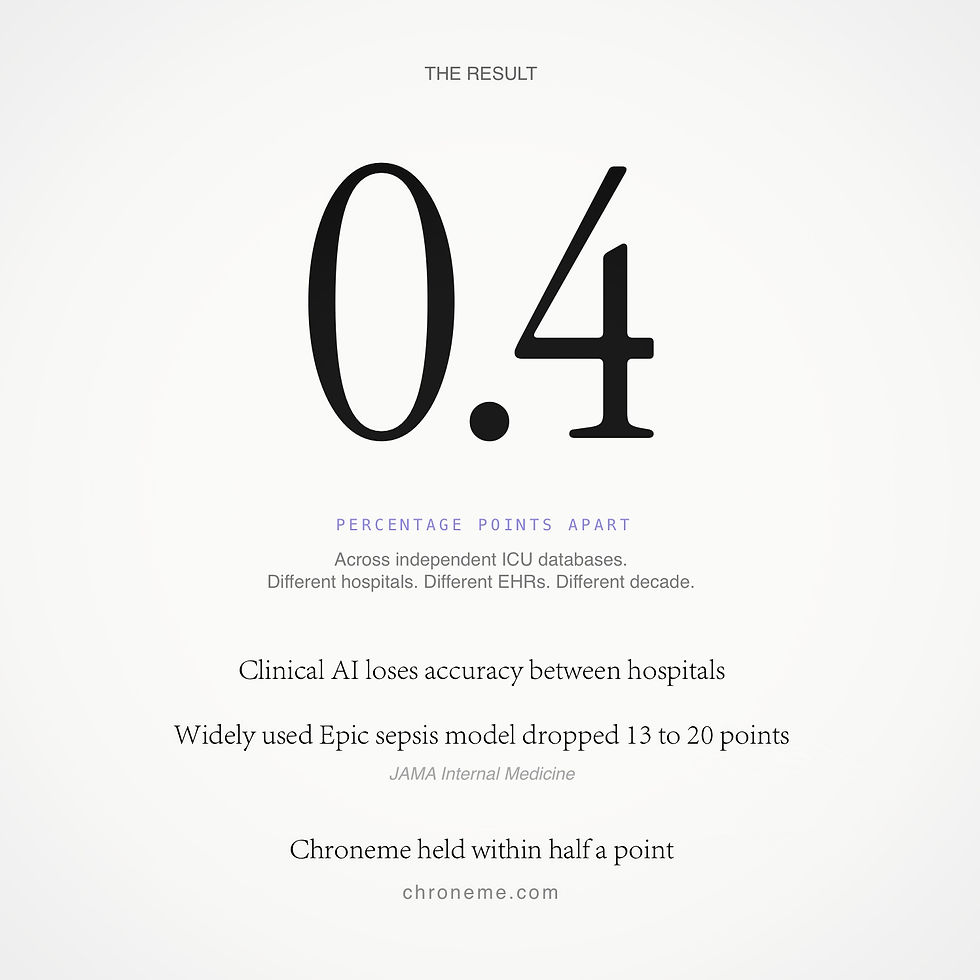

In 2021, a team at the University of Michigan independently tested it and published the results in JAMA Internal Medicine. The model caught only a third of sepsis cases, flagged roughly one in five hospital stays, and scored an AUC of 0.63, which is 13 to 20 points below the 0.76 to 0.83 Epic had reported.

This isn't unusual. The phenomenon has a name: cross-database collapse. A model learns how one site records its data and loses its edge the moment it sees a different one.

I built Chroneme on a different premise. A system that reads how a body actually changes over time, rather than how a hospital documents it, should be able to travel between hospitals. And it shouldn't be limited to one condition. Epic's model predicts sepsis. Chroneme reads trajectories, so the same engine flags any deterioration severe enough to require vasopressors, intubation, or dialysis.

I tested it on two massive, completely independent ICU databases. Different hospitals, different EHRs, a different decade. The results came in less than half a point apart. Cross-database parity this tight is rare in clinical AI, especially for a composite outcome measured before any intervention. The collapse isn't a law of nature. It's a property of how most models are built. Chroneme suggests there's another way.
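For anyone who wants to run the same kind of check on their own model, here is a minimal sketch of how a cross-database gap could be measured. It assumes the metric is AUROC, which this post does not specify, and it is not Chroneme's evaluation code. The function names `auroc` and `parity_gap_points` are hypothetical, and the labels and scores are synthetic toy values.

```python
from typing import Sequence


def auroc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive case outscores a random negative one.

    Ties count as half a win (the Mann-Whitney U formulation of AUROC).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def parity_gap_points(auroc_a: float, auroc_b: float) -> float:
    """Absolute difference between two AUROCs, in points (1 point = 0.01)."""
    return abs(auroc_a - auroc_b) * 100


# Synthetic toy data, not results from any real model or database.
site_a_labels = [1, 1, 1, 0, 0, 0, 0]
site_a_scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1]
site_b_labels = [1, 1, 0, 0, 0, 0]
site_b_scores = [0.8, 0.5, 0.6, 0.3, 0.2, 0.1]

auc_a = auroc(site_a_labels, site_a_scores)
auc_b = auroc(site_b_labels, site_b_scores)
gap = parity_gap_points(auc_a, auc_b)
print(f"site A AUROC={auc_a:.3f}, site B AUROC={auc_b:.3f}, gap={gap:.1f} points")
```

The pairwise loop is quadratic, which is fine for a sanity check but too slow for a full ICU database. A rank-based implementation computes the same quantity at scale. The important part is that the model is scored on each database separately, with no re-tuning in between.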

A half-point gap matters because of what it removes from the equation. Most clinical AI has to be re-validated, re-tuned, sometimes re-trained at every new site. That cost is why good models stall. If a system holds its accuracy across hospitals, EHRs, and decades, the work of deployment changes shape. Regulators can evaluate it once. Health systems can build on it without rebuilding it. The ceiling on clinical AI has always been generalization. Chroneme suggests the ceiling moves.

If you work in clinical AI, tell me where this falls apart.

((Z))